Gallery

Safety Values Legend

Safety Value Prediction: Trajectories colored by predicted value comparing Koopman Frozen to ground truth values

Control Actions Legend

Control actions visualisation: Control interventions shown with magenta arrows

Results

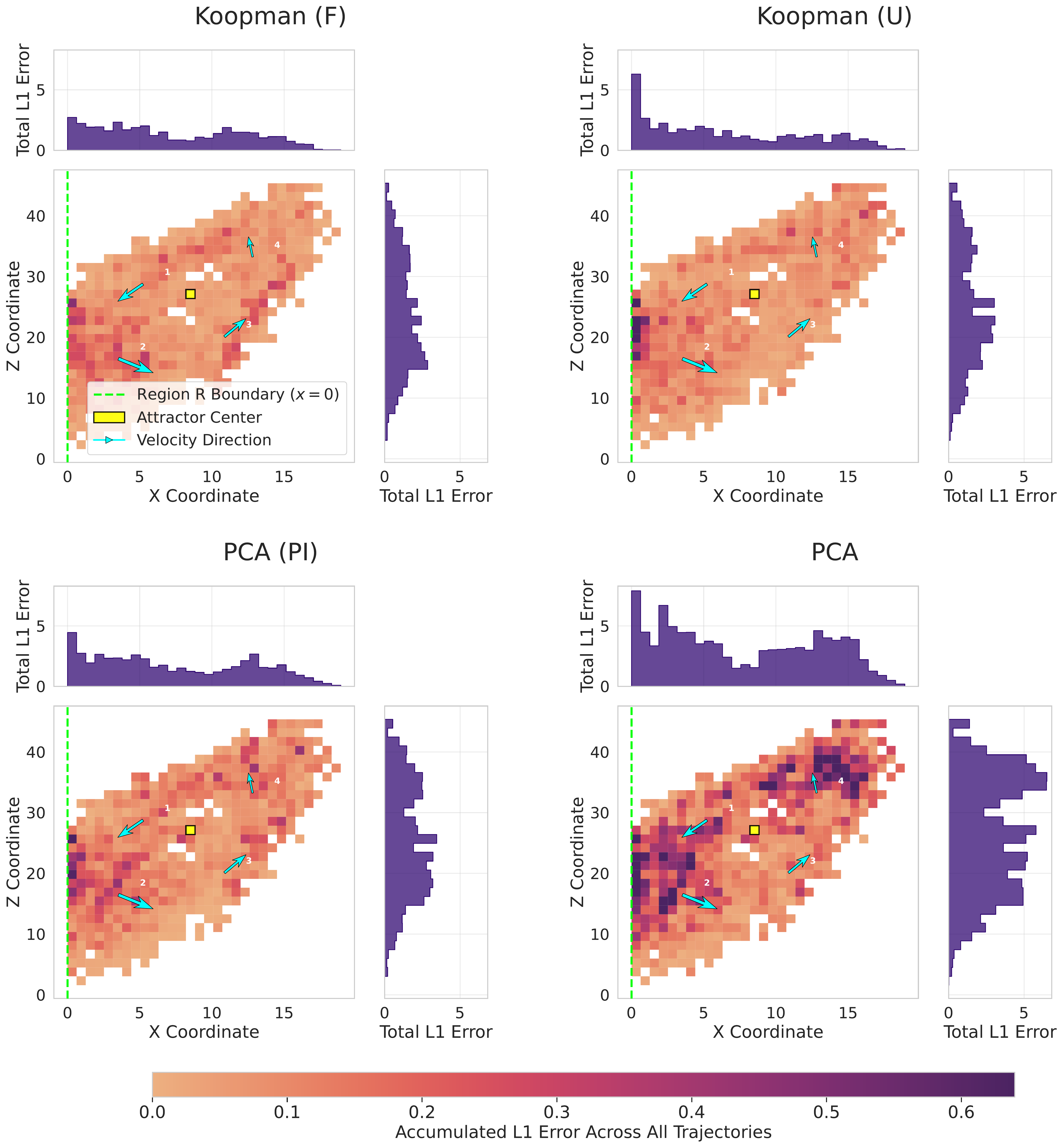

The table below summarizes our results: the Koopman Frozen model achieves the best MSE, MAE, and R² of the four models. Performance is similar whether or not the transformer backbone is frozen: MAE and R² differ only slightly, although the unfrozen variant has a higher MSE with a larger spread. The error accumulation charts show that Koopman Frozen also produces fewer concentrated errors than the PCA baseline, which, despite low MSE scores, shows considerable dark spots, indicating high sensitivity in unstable regions of the Lorenz system.

The animations in the gallery above compare safety value predictions from our frozen Koopman-transformer model with ground truth values computed via a recursive algorithm. To illustrate the practical utility of these predictions, we implement a simple controller that intervenes when a trajectory approaches or exits region Q, using the predicted safety value to decide when to apply control and how much.
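As a rough illustration of the controller idea described above, the following sketch scales an intervention by how far the predicted safety value falls below a threshold. It is not the implementation used here: the function name `control_intervention`, the `threshold` and `gain` parameters, the convention that higher values mean safer states, and the caller-supplied `inward_direction` (a vector pointing back toward the interior of Q) are all assumptions made for the example.

```python
import numpy as np

def control_intervention(safety_value, inward_direction, threshold=0.5, gain=1.0):
    """Hypothetical proportional controller driven by a predicted safety value.

    Assumes higher safety values mean safer states. No control is applied
    while the predicted value is at or above `threshold`; below it, the
    control magnitude grows linearly with the deficit.
    """
    direction = np.asarray(inward_direction, dtype=float)
    deficit = max(0.0, threshold - float(safety_value))
    norm = np.linalg.norm(direction)
    if deficit == 0.0 or norm == 0.0:
        # Safe enough (or no usable direction): do not intervene.
        return np.zeros_like(direction)
    # Push along the unit inward direction, scaled by gain * deficit.
    return gain * deficit * direction / norm

# A state predicted to be safe receives no intervention.
print(control_intervention(0.9, [1.0, 0.0, 0.0]))
# A state with a low predicted value is pushed back toward Q.
print(control_intervention(0.2, [2.0, 0.0, 0.0], threshold=0.5, gain=2.0))
```

A proportional rule like this keeps interventions small near the threshold and larger as the predicted value drops, which matches the "when and how much" framing, but other control laws would fit the same description.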

| Model | MSE (×10⁻⁴) | MAE (×10⁻²) | R² |
|---|---|---|---|
| Koopman Frozen | 3.08 ± 6.55 | 1.16 ± 0.53 | 0.991 ± 0.089 |
| Koopman Unfrozen | 5.59 ± 17.14 | 1.20 ± 0.59 | 0.989 ± 0.089 |
| PCA Physics-Informed | 5.28 ± 8.83 | 1.48 ± 0.65 | 0.989 ± 0.090 |
| PCA | 16.80 ± 17.93 | 2.83 ± 0.98 | 0.983 ± 0.091 |

Safety function prediction performance for each model, reported as mean (μ) ± standard deviation (σ). All metrics were computed on 252 test trajectories within the safety region.
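For readers who want to reproduce statistics of this kind, here is a minimal sketch of how MSE, MAE, and R² could be computed per trajectory and then summarized as mean ± standard deviation. It assumes the reported spread is taken across trajectories, which the caption does not state explicitly; the function names `trajectory_metrics` and `summarize` are hypothetical.

```python
import numpy as np

def trajectory_metrics(y_true, y_pred):
    """Return (MSE, MAE, R^2) for one trajectory of safety values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_true - y_pred
    mse = np.mean(err ** 2)
    mae = np.mean(np.abs(err))
    ss_res = np.sum(err ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    # R^2 is undefined for a constant target; report NaN in that case.
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return mse, mae, r2

def summarize(pairs):
    """Mean and standard deviation of each metric over (y_true, y_pred) pairs."""
    m = np.array([trajectory_metrics(t, p) for t, p in pairs])
    return np.nanmean(m, axis=0), np.nanstd(m, axis=0)

# Toy data: one perfect prediction and one with a single error.
pairs = [([0, 1, 2, 3], [0, 1, 2, 3]), ([0, 1, 2, 3], [0, 1, 2, 4])]
mean, std = summarize(pairs)
print(mean, std)
```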

Comparative accumulated L1 error across the test dataset. L1 error is defined as L₁ = |y_true - y_pred|. The figure displays the X–Z projection for four models. Light regions indicate low error; darker regions represent higher error concentrations. Contextual markers denote the boundary of the training region Q (x=0), corresponding to the Lorenz attractor's equilibrium point. Vector lines show mean velocity and direction in each quadrant.
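An accumulated-error map of this kind can be approximated by summing the pointwise L1 error into a grid over the X–Z plane. The sketch below uses `numpy.histogram2d` with the errors as weights; the bin count, the axis ranges, and the function name `accumulate_l1_xz` are assumptions chosen for illustration and are not taken from the figure.

```python
import numpy as np

def accumulate_l1_xz(states, y_true, y_pred, bins=50,
                     x_range=(-25.0, 25.0), z_range=(0.0, 50.0)):
    """Sum |y_true - y_pred| into an X-Z grid.

    `states` has shape (N, 3) with columns (x, y, z); the y coordinate is
    ignored, which corresponds to projecting onto the X-Z plane.
    """
    states = np.asarray(states, dtype=float)
    l1 = np.abs(np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float))
    grid, x_edges, z_edges = np.histogram2d(
        states[:, 0], states[:, 2],
        bins=bins, range=[x_range, z_range], weights=l1,
    )
    return grid, x_edges, z_edges

# Toy example: two points share a cell, so their errors add up there.
states = [[1.0, 0.0, 1.0], [1.0, 5.0, 1.0], [-1.0, 0.0, 30.0]]
grid, _, _ = accumulate_l1_xz(states, [1.0, 1.0, 1.0], [0.5, 0.75, 1.0], bins=2)
print(grid)
```

Plotting `grid.T` with a colormap that darkens as values increase would give the light-to-dark convention described in the caption.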